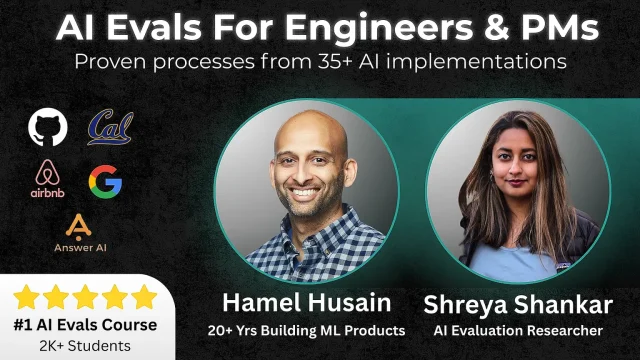

AI Evals For Engineers & PMs – No.1 Course at Maven

AI Evals For Engineers & PMs – No.1 Course at Maven is a practical, cohort-based program that helps engineers and product managers build, implement, and scale evaluation systems for AI products. As AI-powered features become central to modern software, robust evaluation frameworks are no longer optional; they are mission-critical. The course focuses on helping teams ship AI systems that are reliable, measurable, and high-performing.

Why AI Evaluation Matters

AI systems, especially those powered by LLMs, are probabilistic: unlike traditional software, they can produce different outputs for the same input. Structured evaluation (evals) is how teams measure quality despite that variability.
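
To make the point about probabilistic output concrete, here is a minimal sketch in Python. It is not taken from the course material. `fake_model` is a hypothetical stand-in for a real LLM call, and the 80/20 answer split is an invented assumption. The idea it illustrates is that a single run says little about a non-deterministic system, so an eval samples the same input many times and reports a pass rate.

```python
import random


def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for an LLM call (assumption, not a real API).

    Returns the right answer most of the time and a wrong one otherwise,
    to mimic a system that varies between runs on the same input.
    """
    return rng.choices(["Paris", "Lyon"], weights=[0.8, 0.2])[0]


def pass_rate(prompt: str, expected: str, n_samples: int, seed: int = 0) -> float:
    """Sample the model n_samples times and return the fraction of exact matches."""
    rng = random.Random(seed)  # seeded so the eval run itself is reproducible
    outputs = [fake_model(prompt, rng) for _ in range(n_samples)]
    return sum(output == expected for output in outputs) / n_samples


rate = pass_rate("What is the capital of France?", "Paris", n_samples=200)
print(f"pass rate over 200 samples: {rate:.2f}")
```

Seeding the random generator keeps the eval itself repeatable even though the system under test is not, which makes two runs of the same eval comparable.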

This course teaches participants how to:

- Measure model performance beyond simple accuracy

- Detect hallucinations and failure modes

- Create feedback loops for continuous improvement

- Align AI output with product and business goals

For both engineers and PMs, learning how to evaluate AI systems properly reduces production risk and increases product trust.

What You’ll Learn

The course is structured to move from fundamentals to implementation.

1. Foundations of AI Evals

- Types of AI evaluations (offline, online, human-in-the-loop)

- Quantitative vs qualitative evaluation methods

- Designing evaluation datasets

- Defining success metrics for AI products

2. Building Practical Eval Pipelines

- Writing effective test cases for LLM applications

- Automating evaluation workflows

- Using scoring rubrics and structured grading

- Integrating evals into CI/CD pipelines
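
As an illustration of what the topics in this module can look like in practice, the sketch below combines test cases, a structured rubric, and a pass/fail gate that a CI job could use. It is an assumption-laden example, not the course's own tooling: `EvalCase`, `grade`, `run_suite`, `stub_model`, the canned answers, and the 0.9 threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class EvalCase:
    """One test case: a prompt plus the rubric criteria its answer must meet."""
    name: str
    prompt: str
    must_include: List[str]
    max_words: int


def grade(output: str, case: EvalCase) -> Dict[str, bool]:
    """Structured grading: one boolean per rubric criterion."""
    text = output.lower()
    return {
        "includes_required_terms": all(term.lower() in text for term in case.must_include),
        "within_length_limit": len(output.split()) <= case.max_words,
    }


def run_suite(model: Callable[[str], str], cases: List[EvalCase],
              threshold: float = 0.9) -> Tuple[float, bool]:
    """Run every case; a case passes only if all rubric criteria pass."""
    passed = sum(all(grade(model(case.prompt), case).values()) for case in cases)
    rate = passed / len(cases)
    return rate, rate >= threshold


# Hypothetical canned answers standing in for a real model call.
CANNED = {
    "How do I reset my password?": "Open Settings and choose Reset Password.",
    "What is the refund window?": "Refunds are available within 30 days of purchase.",
}


def stub_model(prompt: str) -> str:
    return CANNED.get(prompt, "")


CASES = [
    EvalCase("password_reset", "How do I reset my password?", ["settings", "reset"], 30),
    EvalCase("refund_window", "What is the refund window?", ["30 days"], 30),
]


def main() -> int:
    """Return a process exit code: 0 if the suite meets the threshold, else 1."""
    rate, ok = run_suite(stub_model, CASES)
    print(f"pass rate: {rate:.2f} ({'OK' if ok else 'FAIL'})")
    return 0 if ok else 1
```

A CI step could call `sys.exit(main())` so that a pass rate below the threshold fails the build. Keeping each rubric criterion as a separate boolean also makes it easier to see which criterion failed, rather than only that a case failed.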

3. Product-Oriented Evaluation

- Translating business goals into measurable AI metrics

- Prioritizing evals based on product risk

- Balancing speed vs reliability in AI launches

- Communicating evaluation results to stakeholders

4. Continuous Improvement & Monitoring

- Detecting model drift

- Iterative improvement through feedback loops

- Monitoring real-world usage signals

- Scaling eval systems across teams
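
One simple way to picture drift detection and usage monitoring is a rolling pass rate compared against a baseline. The sketch below is a hedged illustration only: `DriftMonitor`, its window size, and its tolerance are hypothetical choices for this example, and production systems often use more robust statistical tests.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Flags drift when the recent pass rate falls well below a baseline.

    All parameters are illustrative assumptions, not recommended values.
    """

    def __init__(self, baseline_rate: float, window: int = 50, tolerance: float = 0.1):
        self.baseline_rate = baseline_rate
        self.window = window
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # keeps only the most recent results

    def record(self, passed: bool) -> None:
        """Record one graded production sample (True means it passed its check)."""
        self.results.append(passed)

    def current_rate(self) -> Optional[float]:
        """Pass rate over the window, or None until the window has filled."""
        if len(self.results) < self.window:
            return None
        return sum(self.results) / len(self.results)

    def drifted(self) -> bool:
        """True if the windowed pass rate dropped more than `tolerance` below baseline."""
        rate = self.current_rate()
        return rate is not None and (self.baseline_rate - rate) > self.tolerance


monitor = DriftMonitor(baseline_rate=0.9, window=10, tolerance=0.1)
for passed in [True] * 10:
    monitor.record(passed)
print(monitor.drifted())  # healthy window, so no drift is flagged
```

Waiting for the window to fill before flagging anything avoids alerts based on a handful of samples, at the cost of reacting a little later.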

Who This Course Is For

This course is ideal for:

- AI/ML Engineers building LLM-powered applications

- Backend Engineers integrating AI APIs

- Product Managers responsible for AI features

- Startup Founders shipping AI-first products

Whether you’re launching your first AI feature or scaling an existing system, the frameworks taught here apply directly to production environments.

Course Format & Learning Experience

The course is hosted on Maven and follows a cohort-based format that includes:

- Live sessions with structured lessons

- Real-world case studies

- Practical assignments and hands-on exercises

- Peer discussions and collaborative learning

- Direct feedback from instructors

The interactive structure is meant to take learners beyond theory: they implement evaluation systems during the program.

Key Benefits

Participants walk away with:

- A repeatable AI evaluation framework

- Clear metrics aligned with product KPIs

- Automated testing strategies for LLM apps

- Reduced hallucination and failure risks

- Increased confidence in deploying AI features

Instead of relying on intuition or manual spot-checking, graduates gain a systematic, scalable approach to AI quality assurance.

Final Verdict

AI Evals For Engineers & PMs – No.1 Course at Maven stands out because it focuses on execution, not hype. In a world where AI products move fast but break easily, evaluation expertise becomes a competitive advantage. For engineers and PMs serious about building reliable AI systems, this course provides the structure, tools, and mindset needed to ship with confidence and scale responsibly.
